Week 3: Creating a Dynamic World

- Abhijit Baruah

- Jun 15, 2022


Updated: Jun 16, 2022

When I began this project, I envisioned an environment that would be completely dynamic and change during runtime. The first two weeks led me to believe that this would be tough to achieve. The main stumbling block was: how do I keep the agent's observation space constant while still changing the environment it operates in?

To achieve that, I had to completely refactor my observation space and the way the world was set up. The main changes I made were:

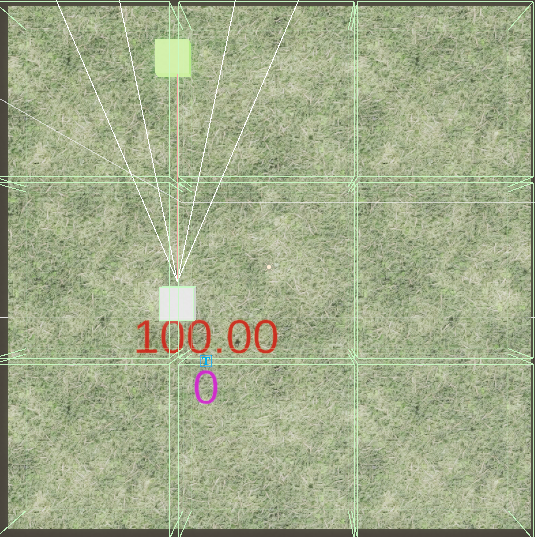

1) Partition my world into "zones", where each zone maintains a bool indicating whether food has spawned in it. The agent observes each of these zones, which removes the notion of "food" / distance to food from its observation space and allows food to be dynamic.

2) Use raycasts for the agent's observations, with a layer mask to ensure that ray hits on "Food" are the only ones recorded. These raycast results were added to the observation vector to give the agent an understanding of when it "saw" food.

3) Add an observation that tells the agent which direction it is facing, i.e. its transform.forward.
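The three changes above can be combined into a single fixed-length observation vector. The sketch below is a minimal, engine-agnostic illustration in Python, not the project's actual Unity code: the world size, the 4 x 4 zone grid, five rays over a 90° field of view, circular colliders, the layer bit values, and every function name (`zone_observation`, `raycast_observation`, `forward_vector`, `build_observation`) are hypothetical. It imitates a Unity-style layer mask by skipping any object whose layer bit is not in the mask, so only "Food" can register a ray hit.

```python
import math
from dataclasses import dataclass

# Hypothetical layer bits, loosely mirroring the idea of a Unity layer mask.
LAYER_FOOD = 1 << 3
LAYER_WALL = 1 << 4

WORLD_SIZE = 20.0  # assumed square world spanning [0, WORLD_SIZE) on x and z
GRID = 4           # assumed 4 x 4 = 16 zones
NUM_RAYS = 5       # assumed number of rays across the field of view
FOV_DEG = 90.0
RAY_LENGTH = 10.0


@dataclass
class WorldObject:
    x: float
    z: float
    radius: float
    layer: int


def zone_index(x, z):
    """Map a world position to one of GRID * GRID zone indices (clamped to the grid)."""
    col = max(0, min(int(x / WORLD_SIZE * GRID), GRID - 1))
    row = max(0, min(int(z / WORLD_SIZE * GRID), GRID - 1))
    return row * GRID + col


def zone_observation(objects):
    """One flag per zone: 1.0 if any food is currently spawned in it, else 0.0."""
    flags = [0.0] * (GRID * GRID)
    for obj in objects:
        if obj.layer & LAYER_FOOD:
            flags[zone_index(obj.x, obj.z)] = 1.0
    return flags


def ray_hit_distance(ox, oz, dx, dz, obj):
    """Distance along a unit-length ray to a circular object, or None on a miss."""
    fx, fz = ox - obj.x, oz - obj.z
    b = fx * dx + fz * dz
    c = fx * fx + fz * fz - obj.radius ** 2
    disc = b * b - c
    if disc < 0:
        return None
    root = math.sqrt(disc)
    t = -b - root
    if t < 0:
        t = -b + root  # ray origin is inside the circle
    return t if t >= 0 else None


def raycast_observation(ax, az, yaw_deg, objects, layer_mask=LAYER_FOOD):
    """Normalised nearest-hit distance per ray (1.0 means nothing seen in range).

    Objects whose layer is not in layer_mask are ignored entirely.
    """
    readings = []
    for i in range(NUM_RAYS):
        offset = -FOV_DEG / 2 + i * FOV_DEG / (NUM_RAYS - 1)
        angle = math.radians(yaw_deg + offset)
        dx, dz = math.sin(angle), math.cos(angle)
        nearest = RAY_LENGTH
        for obj in objects:
            if not (obj.layer & layer_mask):
                continue
            t = ray_hit_distance(ax, az, dx, dz, obj)
            if t is not None and t < nearest:
                nearest = t
        readings.append(nearest / RAY_LENGTH)
    return readings


def forward_vector(yaw_deg):
    """Facing direction for a rotation about Y, analogous to transform.forward."""
    yaw = math.radians(yaw_deg)
    return [math.sin(yaw), 0.0, math.cos(yaw)]


def build_observation(ax, az, yaw_deg, objects):
    """Concatenate all parts; the length never depends on how much food exists."""
    return (zone_observation(objects)
            + raycast_observation(ax, az, yaw_deg, objects)
            + forward_vector(yaw_deg))


if __name__ == "__main__":
    food = [WorldObject(10.0, 15.0, 0.5, LAYER_FOOD), WorldObject(2.0, 2.0, 0.5, LAYER_FOOD)]
    wall = WorldObject(10.0, 12.0, 1.0, LAYER_WALL)
    obs = build_observation(10.0, 10.0, 0.0, food + [wall])
    print(len(obs), obs[GRID * GRID:GRID * GRID + NUM_RAYS])
```

Because each part has a fixed size (16 zone flags, 5 ray readings, 3 forward components in this sketch), the vector stays the same length whether zero or a hundred food items are spawned. In the demo, the wall sits between the agent and the food, but since the mask only includes the food layer, the centre ray still reports the food.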

These changes made a drastic difference: my agent became fully able to find food that never has a fixed location and is spawned randomly and dynamically.

This week yielded a very positive outcome. Next week I am going to expand the world and the agent so that it responds to parameters other than just food.
